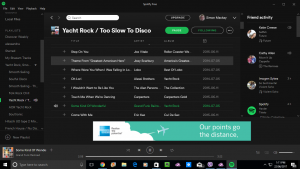

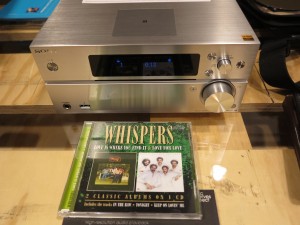

A strong direction for music streaming services is to offer at least CD-quality sound across their whole library

Apple, Amazon and Spotify are lining up or have lined up hi-fi-grade service tiers as part of their audio-streaming services. This is in response to Tidal and Deezer having offered this kind of sound quality for a long time, along with the fear of other boutique audio-streaming services setting up shop and focusing on high-quality audio.

Now there is something interesting happening here regarding hi-fi-grade streaming. Apple is offering CD-grade lossless audio as part of its standard premium subscription while making sure all music available on its Apple Music streaming service is CD-quality.

So how could these streaming music services compete effectively yet serve those of us who value high quality sound from those online music jukebox services that we use?

What are these hi-fi-grade digital audio services about?

This will be something that is expected of Spotify at least

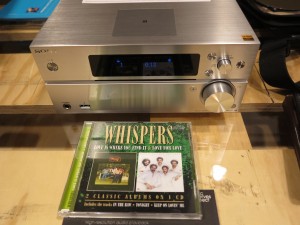

The hi-fi-grade service tiers typically offer a sound quality similar to that of a standard audio CD played on your CD player, with the same digital-audio specifications: a 44.1 kHz sampling rate and 16-bit samples representing stereo sound. Some of these services may offer some content at the 48 kHz sampling rate that was specified for the original DAT audio tapes and may be used as a workflow standard for digital radio and TV.
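To put numbers on those specifications, here is a minimal sketch that works out the raw PCM bit rate from sampling rate, sample size and channel count. The function name `pcm_bit_rate` is made up for this illustration, and the figures are for uncompressed audio before any lossless compression is applied.

```python
def pcm_bit_rate(sample_rate_hz: int, bits_per_sample: int, channels: int = 2) -> int:
    """Return the raw (uncompressed) PCM bit rate in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


# Audio CD: 44.1 kHz sampling rate, 16-bit samples, stereo
cd_rate = pcm_bit_rate(44_100, 16)

# DAT-style workflow: 48 kHz sampling rate, 16-bit samples, stereo
dat_rate = pcm_bit_rate(48_000, 16)

print(f"CD:  {cd_rate / 1000:.1f} kbit/s")   # CD:  1411.2 kbit/s
print(f"DAT: {dat_rate / 1000:.1f} kbit/s")  # DAT: 1536.0 kbit/s
```

So a CD-grade stereo stream carries roughly 1.4 Mbit/s of audio data before compression, which is the baseline the streaming service has to deliver without loss.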

Whereas a regular audio CD stores the audio content in its original uncompressed PCM form, these hi-fi-grade streaming services use a lossless data-compression format similar to the FLAC audio filetype to transmit the sound while preserving the sound quality. This is comparable to how a ZIP “file-of-files” compresses and binds together data from multiple files: what comes out after decompression is bit-for-bit identical to what went in.
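The “lossless” idea can be shown with a small round trip. This sketch generates a second of 16-bit PCM test tone, compresses it and checks that decompression returns exactly the same bytes. It uses Python's general-purpose `zlib` (the same family of compression used in ZIP files) purely as a stand-in; it is not FLAC, which is designed specifically for audio, and the helper `sine_pcm16` is invented for this example.

```python
import array
import math
import zlib

SAMPLE_RATE = 44_100  # CD sampling rate in Hz


def sine_pcm16(freq_hz: float, seconds: float) -> bytes:
    """Generate a mono sine tone as raw signed 16-bit PCM bytes."""
    n = int(SAMPLE_RATE * seconds)
    samples = array.array(
        "h",  # signed 16-bit integers
        (
            int(32767 * 0.5 * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE))
            for i in range(n)
        ),
    )
    return samples.tobytes()


original = sine_pcm16(440.0, 1.0)
compressed = zlib.compress(original, 9)  # stand-in for an audio-specific lossless codec
restored = zlib.decompress(compressed)

print("original bytes:  ", len(original))
print("compressed bytes:", len(compressed))
print("bit-exact after round trip:", restored == original)
```

A pure test tone compresses far better than real music would, so the size saving here says nothing about real-world streams. The point is only that the restored samples match the original exactly, which is what separates lossless delivery from a lossy codec.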

CD-grade digital audio was adopted during the late 1980s as the benchmark for high-quality sound reproduction in the consumer space. As well, the DAT tapes that recorded 16-bit PCM audio at 44.1 or 48 kHz sampling rates were considered the two-channel recording standard for project studios and similar professional audio content-creation workflows. This is even though MiniDisc, which used a lossy audio codec, caught on in the UK and Japan for personal audio applications.

Some of these services offer extras like surround-sound or object-based audio soundmixes, or supply the audio at “master-grade” specifications like 96 or 192 kHz sampling rates or 24 or more bits per sample. But these are best enjoyed on equipment that can properly reproduce the sound held therein. By contrast, most good audio equipment built since the 1970s was engineered to work capably with the audio CD as its pinnacle.

These hi-fi-grade services are gaining appeal thanks to telcos and ISPs offering increased bandwidth and data allowances for fixed and mobile broadband Internet services. This is more so in markets where there is increased competition for the customer’s fixed or mobile Internet service dollar.
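To see why bandwidth and data allowances matter, the sketch below estimates how much data an hour of stereo audio takes at CD and master-grade specifications. The function name and the `ASSUMED_LOSSLESS_RATIO` value are assumptions for illustration only: actual lossless compression savings vary with the recording, and no service's real figures are used here.

```python
# Stereo formats mentioned in the article: (sampling rate in Hz, bits per sample)
FORMATS = {
    "CD quality (44.1 kHz / 16-bit)": (44_100, 16),
    "Master grade (96 kHz / 24-bit)": (96_000, 24),
    "Master grade (192 kHz / 24-bit)": (192_000, 24),
}

# Assumption: lossless compression shrinks the stream to about 60% of raw size.
# Real savings depend on the music and the codec.
ASSUMED_LOSSLESS_RATIO = 0.6


def megabytes_per_hour(sample_rate_hz, bits_per_sample, channels=2, ratio=1.0):
    """Estimate data used by one hour of audio, in megabytes (10^6 bytes)."""
    bytes_per_second = sample_rate_hz * bits_per_sample * channels / 8
    return bytes_per_second * 3600 * ratio / 1_000_000


for name, (rate, bits) in FORMATS.items():
    raw = megabytes_per_hour(rate, bits)
    packed = megabytes_per_hour(rate, bits, ratio=ASSUMED_LOSSLESS_RATIO)
    print(f"{name}: {raw:.0f} MB/h raw, about {packed:.0f} MB/h compressed")
```

Uncompressed, CD-quality stereo works out at about 635 MB per hour, while 192 kHz / 24-bit audio is about six and a half times that, which is why generous data allowances make these tiers more practical on mobile connections.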

As well, there is a highly competitive market war going on between at least Bose, Apple and Sony for high-quality active-noise-cancelling Bluetooth headsets, with the possibility of other headset manufacturers joining in. This is very close to the late-1970s Receiver Wars where hi-fi companies were vying with each other for the best hi-fi stereo receivers for one’s hi-fi system and increasing value for money in that product class.

Here, a streaming music service that befits these high-quality in-ear or over-the-head headsets could show what they are capable of when it comes to sound reproduction while on the road.

Let’s not forget that Apple and others are working on power-efficient hi-fi-grade digital-analogue-converter circuitry for laptops, tablets, smartphones and other portable audio endpoint devices. Then hi-fi-grade digital-analogue-conversion circuitry that connects to USB or Apple devices is being offered by nearly every hi-fi name under the sun whether as a separate box or as part of the functionality set that a hi-fi component or stereo system would offer.

Current limitations with enjoying hi-fi-grade audio on the move

There are limitations with this kind of service offering, especially with the use of Bluetooth Classic streaming from mobile devices to headphones or automotive infotainment setups. At the moment, the preferred approach is a wired connection, whether via a traditional analogue headphone cable or via an external digital-analogue converter box, to run the sound to a pair of good-quality headphones while “on the road”.

Similarly, Apple’s and Google’s smartphone-automotive-integration platforms need to be able to support use of these hi-fi-grade audio services properly so you can benefit from this class of sound when you are at the wheel of your car.

What could be done?

One step that many music-streaming services can take is to create a service-level distinction between a CD-quality stereo lossless audio service and a higher-grade extra-cost audio service that focuses on “master-grade” or multichannel soundmixes. Here, most of us like our music in stereo sound and see CD-quality sound as the pinnacle, with equipment engineered to that calibre. Meanwhile, the esoteric audiophiles would invest in equipment and services that can handle master-grade audio or multichannel soundmixes.

The music services could then move towards offering the CD-quality stereo lossless sound as the audio quality for the standard paid service subscription. That includes moving the service’s music library towards that kind of quality. The user would need to be able to enable and disable the CD-quality lossless stereo sound on a device-by-device basis, perhaps to cater for smartphone use or limited bandwidth.
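As a rough illustration of that device-by-device switch, here is a minimal sketch of how a client app might pick a stream. Everything in it is hypothetical: the `DevicePreference` class, the `choose_stream` function and the `LOSSLESS_MIN_KBPS` threshold are invented for this example and do not describe any real service's settings or API.

```python
from dataclasses import dataclass

# Assumption: treat the link as good enough for CD-quality lossless streaming
# once it sustains about 1.5 Mbit/s. This threshold is illustrative only.
LOSSLESS_MIN_KBPS = 1_500


@dataclass
class DevicePreference:
    """Hypothetical per-device setting held in the user's account."""
    device_name: str
    lossless_enabled: bool


def choose_stream(pref: DevicePreference, available_kbps: float) -> str:
    """Pick the lossless stream only if the user allows it on this device
    and the connection has enough bandwidth; otherwise fall back to lossy."""
    if pref.lossless_enabled and available_kbps >= LOSSLESS_MIN_KBPS:
        return "lossless"
    return "lossy"


hifi_streamer = DevicePreference("Living-room network player", lossless_enabled=True)
phone = DevicePreference("Smartphone", lossless_enabled=False)

print(choose_stream(hifi_streamer, available_kbps=50_000))  # lossless
print(choose_stream(phone, available_kbps=50_000))          # lossy
print(choose_stream(hifi_streamer, available_kbps=800))     # lossy
```

Keeping the user's choice and the bandwidth check separate means a phone on a capped mobile plan can stay on the lossy stream even when the connection itself is fast.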

Where a music service offers transactional “download-to-own” music, the recordings could be offered as CD-quality stereo lossless files. There could also be the ability to provide a complimentary download of previously-purchased material as CD-quality stereo lossless files.

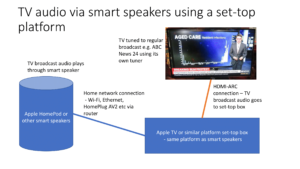

At the moment, there are a number of open-frame and proprietary paths that are able to use a home network to transmit CD-quality or master-quality lossless digital audio from a computing device or streaming audio service to audio endpoint devices within the home. But there needs to be more done to support mobile and portable setups where one is likely to hear audio files while out and about.

The Bluetooth SIG could investigate how CD-quality lossless audio can be sent wirelessly between devices using the various audio profiles that they oversee. This is more so as Bluetooth is used primarily to send multimedia audio from a smartphone or tablet to speakers, headphones or home and car audio equipment. Here, it could be based on their Bluetooth LE Audio specification which is being used to revise the Bluetooth multimedia audio use case effectively.

Similarly, the use of USB-C as a “digital audio path” from a computing device to an audio-output device needs to be encouraged. This would come into its own when connecting to audio devices or systems that have high-grade digital-analogue conversion circuitry, which can make the most of high-quality audio streaming services.

In the automotive context, Apple CarPlay and Android Auto which are used to provide integrated smartphone-dashboard functionality could be improved to provide lossless audio transfer between the smartphone and the car’s infotainment system. This may be valued as a differentiator that can be applied to premium car-audio setups.

Once there is a list of standard protocols adopted for streaming lossless hi-fi-grade stereo sound to headsets and automotive setups, covering both wired and wireless connectivity, this could make proper CD-quality stereo sound more relevant on the road.
