Amazon’s next generation of Echo devices to use edge computing

Articles

New Amazon Echo devices

Everything Amazon announced at its September event | Mashable

Amazon hardware event 2020: Everything the company announced today | Android Authority

Use of edge computing in new Echo devices

Amazon’s Alexa gets a new brain on Echo, becomes smarter via AI and aims for ambience | ZDNet

From the horse’s mouth

Amazon

Introducing the All-New Echo Family—Reimagined, Inside and Out (Press Release)

New Echo (Product Page with ordering opportunity)

New Echo Dot (Product Page with ordering opportunity)

New Echo Show 10 (Product Page with ordering opportunity)

My Comments

Amazon are premiering a new lineup of Echo smart-speaker and smart-display devices that work on the Alexa voice-driven home assistant platform.

These devices convey a lot of the aesthetics one would have seen in science-fiction or “future living” material written from the 1950s to the 1970s. This impression is reinforced by an indoor camera drone device that Amazon announced around the same time.

As well, the Echo and Echo Dot speakers have a spherical look that conveys the retro-futuristic industrial-design style put forward from the 1950s to the early 1970s. Examples of this style include the Hoover Constellation vacuum cleaner of the era or the Grundig Audiorama speakers that were initially designed in the 1970s thanks to the Space Race. You might as well ask Alexa to pull up and play space-age bachelor-pad music from Spotify or Amazon Music through these speakers.

The look is taken further with the base of the Echo and Echo Dot lighting up as a pin-stripe to indicate the device’s current status. This industrial design also permits a 360-degree approach to sound. The Echo is also a smart-home hub that works with Zigbee, Bluetooth Low Energy and Amazon Sidewalk devices so you don’t need to use a separate hub for them.

Amazon Echo Dot Kids Edition that is available either as a panda or tiger – for the young or young at heart

The small Echo Dot comes in two different variants, one with a clock on the front and one without. It also comes as a “Kids’ Edition” with an option of a panda face or a tiger face. This is offered as part of Amazon’s Alexa Kids program which provides a child-optimised package of features for this voice assistant. But I also wonder whether this can be run as a regular Echo Dot device, which may appeal to those adults who are young at heart and want that mischievous look these devices have.

The Echo Show 10 smart display automatically pans its screen to face the user. This is driven by machine vision that works with the camera and microphone array. But the camera has a shutter for your privacy. Of course you can use this device as a videophone thanks to the Alexa platform’s support for Amazon’s own calling platforms as well as Zoom and Skype.

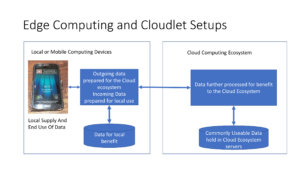

What makes this generation of Echo devices more interesting is that they implement an edge-computing approach, where more of the processing takes place on the device itself, to improve sound quality and intelligibility when you interact with Alexa. This even opens up ideas like natural-flow conversations with Alexa or allowing Alexa to participate as an extra person in a multiple-person conversation. It furthers Amazon’s direction towards implementing ambient computing on their Alexa voice-driven assistant platform.

But Google was the first to implement this concept in a smart-speaker / voice-driven assistant use case. Here, they used it in the Nest Mini smart speaker to improve how well the Google Assistant understands your commands.

I do see this as a major direction for smart-speaker and voice-driven-assistant technology because it improves responsiveness and user interaction with these devices. It may even be about keeping premises-local configurations and customisations in the device’s memory rather than in the cloud, which could bring a number of benefits. For example, it may improve user privacy because minimal user data is held on remote servers. Or it could allow for an optimised highly-responsive setup for the home-automation setups we build around these devices.

What needs to be looked at is a way to implement localised peer-to-peer sharing of data between smart speaker devices that are on the same platform and are installed within the same home network. This can allow users to have the same quality of experience no matter which device they use within the home.

Devices with the edge-computing smarts should also be able to process data locally on behalf of devices that don’t have that capability. This would be important if you bring in a newer device with this functionality and effectively “push down” the existing device to a secondary usage scenario. In this use case, having another device with the edge-computing smarts on your home network and bound to your voice-driven-assistant platform account could assure the same kind of smooth user experience as using the new device directly.
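As a rough illustration of this “push down” scenario, here is a minimal Python sketch of how a platform might decide where a voice request gets processed. It isn't based on any actual Amazon or Google API. The `Speaker` class, its fields and the `pick_processor` function are all hypothetical names made up for this example, and the rule it applies (own device first, then an edge-capable peer on the same home network and account, then the cloud) is an assumption about how such a setup could work.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Speaker:
    """Hypothetical description of a smart speaker on a home network."""
    name: str
    account: str        # voice-assistant platform account the device is bound to
    network: str        # identifier for the home network the device is on
    edge_capable: bool  # True if the device can process speech on-device


def pick_processor(source: Speaker, devices: Sequence[Speaker]) -> Optional[Speaker]:
    """Choose which device should process audio captured by `source`.

    Returns `source` itself if it is edge-capable, otherwise the first
    edge-capable peer on the same network and account. Returns None when
    no local device qualifies, meaning the request falls back to the cloud.
    """
    if source.edge_capable:
        return source
    for peer in devices:
        if (
            peer != source
            and peer.edge_capable
            and peer.account == source.account
            and peer.network == source.network
        ):
            return peer
    return None


# Example: an older Echo Dot moved to the bedroom borrows the new Echo's smarts.
new_echo = Speaker("Living room Echo", "household", "home-lan", True)
old_dot = Speaker("Bedroom Echo Dot", "household", "home-lan", False)
chosen = pick_processor(old_dot, [new_echo, old_dot])
print(chosen.name if chosen else "cloud")
```

Here the older Echo Dot has no edge-computing ability of its own, so the request is handed to the newer Echo on the same network and account. If no such device were present, the function returns `None` and the request would go to the cloud as it does today.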

These Amazon Echo devices are showing a new direction for voice-driven home assistant devices to allow for improved intelligibility and smoother operation.