Traditional TV and radio could be delivered via the same means as the Internet

One direction we expect broadcast radio and TV technology to take is streaming via Internet-based technologies while assuring users of an experience similar to the one they have when receiving content over the air.

This means using agile wired and wireless Internet technologies, such as 5G mobile broadband, fibre-to-the-premises and fixed-wireless broadband, along with Ethernet and Wi-Fi local area networks, to deliver this kind of content.

What is the goal here

The goal here is to provide traditional broadcast radio and TV service through wired or wireless broadband-service-delivery infrastructure in addition to or in lieu of dedicated radio-frequency-based infrastructure.

The traditional radio-frequency approach uses specific RF technologies like FM, DAB+, DVB and ATSC to deliver audio or video content to radio and TV receivers. This can be terrestrial delivery to a rooftop, indoor or set-attached antenna, which is referred to in the UK and most Commonwealth countries as an aerial; delivery via a cable system through a building, campus or community; or delivery via a satellite, where the signal is received using special antennas like satellite dishes.

The typical Internet Protocol (IP) network used for Internet service uses different transport media, whether wired or wireless. It can be mobile broadband received using a mobile phone, or a fixed setup such as fibre-to-the-premises, fixed wireless or fibre-copper. As well, such networks typically include a local-area network covering a premises or building that is based on Ethernet, Wi-Fi wireless, HomePlug or G.hn powerline, or similar technologies.

The desirable user experience

It will maintain the traditional remote-control experience like channel surfing

It is also about providing a basic setup and use experience equivalent to what is expected when receiving broadcast radio and TV service using digital RF technologies. This includes “scanning” the wavebands for stations to build up a directory of what’s available locally as part of setting up the equipment; using up/down buttons to change between stations or channels; keying “channel numbers” into a keypad to select TV channels according to a traditional, easy-to-remember channel-numbering scheme; using a “last-channel” button to flip between two programmes you are interested in; and allocating regularly-listened-to stations to preset buttons so you have them available at a moment’s notice.

This has been extended to a richer user experience for broadcast content in many ways. For TV, it has extended to a grid-like electronic programme guide listing what is showing now or will be shown in the coming week on all of the channels, so you can switch to a show that you like to watch or have that show recorded. For radio, it has been about showing more details about what you are listening to, such as the name of the song that is playing. Even ideas like prioritising or recording the news or traffic announcements that matter, or selecting content by type, have become a desirable part of the broadcast user experience.
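To make this interaction model concrete, here is a minimal Python sketch of broadcast-style navigation over a scanned station directory: up/down stepping, direct channel-number entry, a last-channel toggle and preset buttons. The `Station` and `Receiver` names and the exact behaviour of each button are hypothetical illustrations, not any standard's or manufacturer's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Station:
    number: int  # hypothetical channel number assigned during the scan
    name: str


@dataclass
class Receiver:
    """Hypothetical model of broadcast-style navigation over a scanned directory."""
    directory: list[Station]                                # result of the "scan", in channel order
    presets: dict[int, int] = field(default_factory=dict)   # preset button -> channel number
    current: int = 0                                        # index of the station now playing
    previous: int | None = None                             # index remembered for "last channel"

    def _tune(self, index: int) -> Station:
        # Remember where we came from so the last-channel button can flip back.
        if index != self.current:
            self.previous = self.current
            self.current = index
        return self.directory[self.current]

    def up(self) -> Station:
        return self._tune((self.current + 1) % len(self.directory))  # wraps at the top

    def down(self) -> Station:
        return self._tune((self.current - 1) % len(self.directory))  # wraps at the bottom

    def enter_number(self, number: int) -> Station | None:
        for i, station in enumerate(self.directory):
            if station.number == number:
                return self._tune(i)
        return None  # unknown number: stay on the current station

    def last_channel(self) -> Station:
        if self.previous is None:
            return self.directory[self.current]
        return self._tune(self.previous)  # swaps current and previous

    def store_preset(self, button: int) -> None:
        self.presets[button] = self.directory[self.current].number

    def recall_preset(self, button: int) -> Station | None:
        number = self.presets.get(button)
        return None if number is None else self.enter_number(number)
```

Storing presets by channel number rather than by list position means they still point at the right station if a rescan reorders the directory.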

Relevance of traditional linear broadcasting today

There are people who cast doubt on the relevance of traditional linear broadcast media and its associated experiences in this day and age.

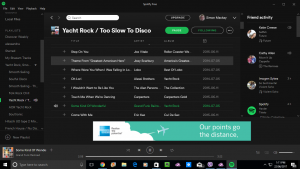

This doubt is brought about by podcasts, Spotify-like audio streaming services, video-on-demand services and the like, which can offer a wider choice of content than traditional broadcast media.

But some user classes and situations place value upon the traditional broadcast media experience. Firstly, Generation X and prior generations have grown up with broadcast media as part of their lives, thanks to affordable sets with a common user experience and an increasing number of stations or channels being available. These users often turn to broadcast media for casual viewing and listening, with a significant number of them recording broadcast material to enjoy again on their own terms.

Then there is the reliance on traditional broadcast media for news and sport, because it delivers up-to-date information with little effort on the user’s part. Let’s not forget that some users rely on this media experience for discovery of content curated by someone else, like staff at a TV channel or a radio station, rather than an online service’s content-recommendation engine. Even the on-air talent is valued by a significant number of listeners or viewers as personalities in their own right because of how they present themselves on radio or TV.

Access without traditional radio-frequency infrastructure

DVB-I and allied technologies may reduce reliance on RF infrastructure like TV aerials or satellite dishes

One of the goals here is to allow access to traditional broadcast radio and TV without depending on particular radio-frequency infrastructure types or reception conditions. This can include someone offering a linear broadcast service with all the trappings of such a service without needing access to RF-based broadcast technologies like a transmitter.

To some extent, it could be a way to use the likes of SpaceX Starlink or 5G mobile broadband to deliver radio and TV service to rural and remote areas. This could come into its own where the goal is to provide the full complement of broadcasting services to these areas.

It also covers a situation happening with cable-TV networks in some countries, where these networks are being repurposed purely for cable-modem Internet service. As well, some neighbourhoods don’t take kindly to satellite dishes popping up on the roofs or walls of houses, seeing them as a blight. Here, multi-channel pay-TV operators have had to consider Internet-based delivery methods to bring their services to potential customers without facing these issues.

Or a mobile platform tablet could run software to pick up TV broadcasts via the Internet

Let’s not forget that IP-based data networks are being seen as a way to extend the reach of traditional broadcast services into parts of a building that don’t have ready access to a reliable RF signal or traditional RF infrastructure. This may be because it is seen as costly or otherwise prohibitive to extend a master-antenna TV setup to a particular area, or to install a satellite dish, TV aerial or cable-TV connection at a particular house.

In the portable realm, this applies especially to smartphones and mobile-platform tablets, even where these devices have a broadcast-radio or TV tuner. Reception using these tuners is only useful if you plug a wired headset into the device’s headset jack, because of a long-standing design practice carried over from Walkman-type personal radios where the headset cable serves as the FM or DAB+ antenna. Here, the smartphone could use mobile broadband or Wi-Fi for broadcast-radio reception when you listen through its speaker or a Bluetooth headset.

Complementing traditional radio infrastructure

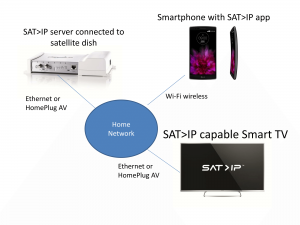

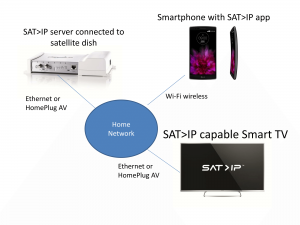

What SAT>IP is about with satellite TV – broadcast-LAN content distribution

In the same context, it is also being considered as a different approach to providing “broadcast-to-LAN” services, where broadcast signals are received from RF infrastructure by a tuner-server device and streamed into a local-area network. This could allow the client device to choose the best available source for a particular channel or station.

But even the “broadcast-to-LAN” approach can be improved upon by providing a user experience equivalent to a traditional RF-based broadcast setup. This would benefit buildings or campuses that have a traditional aerial or satellite dish installed at the optimum location but use Ethernet cabling, Wi-Fi wireless or similar technologies, or a mixture of them, to distribute the broadcast signal around the development.

As well, some of these setups may mix traditional broadcast channels and IP-delivered content into a form that can be received with that traditional broadcast user experience. Or they may seamlessly switch between a fully-Internet-delivered source and the broadcast stream that a broadcast-LAN server provides to the local network that is also providing Internet service. This can cater for broadcast-LAN setups based around devices that don’t have enough capacity to serve many broadcast streams at once.
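The fallback described above can be sketched as follows, assuming a hypothetical broadcast-LAN tuner server that can only tune a fixed number of channels at once. This is a simplification: a real tuner receives a whole multiplex or transponder carrying several services, whereas here each tuner is treated as carrying one channel, and clients watching the same channel share it. The `TunerServer` and `choose_source` names are illustrative only.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TunerServer:
    """Hypothetical broadcast-LAN server with a limited number of tuners."""
    channels: set[str]                                     # receivable from the local aerial or dish
    tuners: int                                            # simplification: one channel per tuner
    in_use: dict[str, str] = field(default_factory=dict)   # client id -> channel being served

    def release(self, client: str) -> None:
        self.in_use.pop(client, None)

    def try_reserve(self, client: str, channel: str) -> bool:
        self.release(client)  # a client switching channel gives up its old stream first
        if channel not in self.channels:
            return False
        busy = set(self.in_use.values())
        # Share a tuner already on this channel, or take a free one if any remain.
        if channel in busy or len(busy) < self.tuners:
            self.in_use[client] = channel
            return True
        return False


def choose_source(server: TunerServer, client: str, channel: str,
                  internet_channels: set[str]) -> str | None:
    """Prefer the local broadcast feed; fall back to the Internet stream."""
    if server.try_reserve(client, channel):
        return "broadcast-lan"
    if channel in internet_channels:
        return "internet"
    return None  # not available from either source
```

With a two-tuner server, a third client asking for a different channel is quietly handed the Internet stream instead of being refused, which is the kind of seamless behaviour the paragraph describes.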

Pure Sensia 200D Connect Internet radio – an example bringing broadcast radio via RF and Internet means

Even a radio or TV device could maintain a traditional user experience while content is delivered over both traditional RF infrastructure and Internet-based infrastructure. This could range from handling an alternative content stream offered via the Internet while the main content is offered via the station’s traditional RF means, to carrying independent broadcast content from a broadcaster who has no access to RF infrastructure or spectrum.

Similarly, some digital-broadcast operators want to use networks typically used for Internet service delivery as a backhaul between a broadcaster’s studios and the transmitter. This is seen as cost-effective because it reduces the need for an expensive dedicated wired or wireless link to the transmitter. Instead, operators can rely on a business-grade Internet service with guaranteed service-quality standards for this purpose.

Even a master-antenna system set up to give a building’s or development’s occupants access to broadcast content via RF coaxial-cable infrastructure could benefit this way. This could involve repackaging broadcasters’ content from Internet-based links offered by the broadcasters into a form deliverable over the system’s RF cable infrastructure, rather than relying on an antenna or satellite dish to bring radio and TV to that system. It could also be seen as a way to insert extra content for that development through this system, such as a health TV channel for hospitals or a tourist-information TV channel for hotels.

How is this approach being taken

Here, a broadcast-ready linear content stream or a collection of such streams that would be normally packaged for a radio-frequency transport is repackaged for a data network working to IP-compliant standards. This can be done in addition to packaging that content stream for one or more radio-frequency transports.

This approach builds on the idea behind the ISO OSI model of network architecture, where higher-layer protocols can work over many different lower-layer transports, with this concept being applied to broadcast radio and TV.

The IP-based network / Internet transport approach can also allow the broadcast stream or stream collection to be repackaged for an RF transport with minimal effort. One use case is using a business-grade Internet service as a backhaul for delivering radio or TV service to multiple transmitters.

This is different from the Internet-radio or “TV via app” approach, where a collection of broadcasters is streamed via Internet means. Those setups rely primarily on online content directories operated by the broadcasters themselves or by third parties like TuneIn Radio or Airable.net, and they don’t typically offer broadcast-like user experiences such as channel surfing or traditional channel-number entry.
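As a sketch of this layering idea for DVB television, which carries services in an MPEG-2 transport stream made of fixed 188-byte packets, the same packets that would feed an RF modulator can instead be grouped into UDP datagrams for an IP network. Seven packets (1,316 bytes) per datagram is a common choice because it fits within a standard 1,500-byte Ethernet frame. This is a generic IPTV-style illustration under those assumptions, not a description of how DVB-I itself delivers services; many real deployments also add an RTP header, which is omitted here.

```python
from __future__ import annotations

import socket

TS_PACKET_SIZE = 188        # fixed MPEG-2 transport stream packet length
SYNC_BYTE = 0x47            # every TS packet starts with this byte
PACKETS_PER_DATAGRAM = 7    # 7 x 188 = 1316 bytes, fits a 1500-byte Ethernet MTU


def ts_to_datagrams(stream: bytes) -> list[bytes]:
    """Split a transport stream into UDP-sized payloads without altering the packets."""
    if len(stream) % TS_PACKET_SIZE:
        raise ValueError("stream is not a whole number of 188-byte TS packets")
    packets = [stream[i:i + TS_PACKET_SIZE]
               for i in range(0, len(stream), TS_PACKET_SIZE)]
    for n, packet in enumerate(packets):
        if packet[0] != SYNC_BYTE:
            raise ValueError(f"lost sync at packet {n}")
    step = PACKETS_PER_DATAGRAM
    return [b"".join(packets[i:i + step]) for i in range(0, len(packets), step)]


def send_datagrams(datagrams: list[bytes], addr: tuple[str, int]) -> None:
    """Send each payload as one UDP datagram. No pacing: a real sender must
    emit datagrams at the stream's own bitrate."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for payload in datagrams:
            sock.sendto(payload, addr)
```

Usage would be along the lines of `send_datagrams(ts_to_datagrams(data), ("239.0.0.1", 5000))`, where the multicast group and port are hypothetical. The transport-stream packets themselves are untouched, which is what lets the same stream go back out over an RF transport at the far end of a backhaul.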

At the moment, the DVB Project, which has effectively defined the standards for digital TV in Europe, much of Asia, most of Africa, and Oceania, has worked on this approach through DVB-I (previous coverage on this site) and allied standards for television. This is in addition to the DVB Home Broadcast (DVB-HB) standard, released in February this year, which builds upon SAT>IP towards a standardised broadcast-to-LAN setup no matter the RF bearer.

Similarly, the EBU has worked on the HRADIO project to apply this concept to DAB+ digital radio, which is used for radio services in at least Europe and Oceania.

Another advantage that is also being seen is the ability for someone to get “on the air” without needing to have access to radio-frequency spectrum or be accepted by a cable-TV or satellite-TV network. This may appeal to international broadcasters or to those offering niche content that isn’t accepted by the broadcast establishment of a country.

What is it also leading to

This is leading towards hybrid broadcast and broadband content-delivery approaches. That is where content from the same broadcaster is delivered by RF and Internet means with the end user using the same user experience to select the online or RF-broadcast content.

One use case is gaining access via the Internet to supplementary content from that broadcast, whether the viewer or listener enjoys the broadcaster through RF-based means or through the Internet. This could be prior episodes of the same show, further information about a concept put forward in an editorial programme, or a product advertised in a commercial.

For radio, this would be about showing up-to-date station branding alongside show names and presenter images. If the show is informational, there would be rich visual information like maps, charts, bullet lists and the like to augment the spoken information.

If it is about music, you would see the title and artist of what’s playing, perhaps with album cover art and artist images. For classical music, where people think primarily of a work by a particular composer, this may be about the composer and the work, perhaps with a reference to the currently-playing movement. Operas and other musical theatre may have the libretto shown in real time alongside the performance.

In all music-related cases, there may be the ability to “find out more” on the music and who is behind it or even to buy a recording of that music, whether as physical media like an LP record or CD, or as a download-to-own file.

For TV content, this would be about a rich experience for sports, news, reality and similar shows. For example, the Seven Network created an improved interactive experience for the 2021 Tokyo Olympics and Paralympics by using 7Plus to provide direct access to particular sports during the Games. A true hybrid setup on equipment with a broadcast tuner would allow a user to select Channel 7 or 7Mate for the standard broadcast feeds using the 7Plus user experience, with those feeds supplied by either the broadcast tuner or the Internet stream depending on signal quality.
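The signal-quality switching mentioned above could use hysteresis, so that a receiver near the edge of coverage doesn't keep flapping between sources. This minimal sketch assumes a hypothetical receiver that reports a signal-to-noise ratio in dB, or `None` when it has no lock; the `HybridSelector` name and both thresholds are illustrative values, not figures from any standard or product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class HybridSelector:
    """Hypothetical tuner/Internet source choice with hysteresis."""
    drop_below_db: float = 15.0    # illustrative: leave the tuner below this SNR
    return_above_db: float = 20.0  # illustrative: return to the tuner above this SNR
    source: str = "tuner"

    def update(self, snr_db: float | None) -> str:
        if snr_db is None:
            # No RF lock at all: the Internet stream is the only option.
            self.source = "internet"
        elif self.source == "tuner" and snr_db < self.drop_below_db:
            self.source = "internet"
        elif self.source == "internet" and snr_db > self.return_above_db:
            self.source = "tuner"
        # Between the two thresholds, keep whichever source is already playing.
        return self.source
```

The gap between the two thresholds is the design choice that matters: a reading of 18 dB keeps the tuner if it is already in use, but does not pull the viewer back from the Internet stream until the signal has clearly recovered.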

Issues to consider

There are issues that will be raised where broadcast radio and TV are delivered over Internet infrastructure with the goal of a broadcast-like user experience.

One of these is ensuring users don’t pay extra for this kind of reception compared to delivery by RF-based means. Here, these Internet-based broadcast setups would have to be “zero-rated” so that users don’t incur data costs on metered Internet services like mobile broadband. Add to this a common issue in rural areas, where Internet service may not be reliable enough to provide the same kind of user experience as traditional RF-based broadcast reception.

As well, broadband infrastructure providers would need to ensure transparent access to Internet-based broadcast setups so that users have access to standard broadcasters without being dependent on service from particular retail ISPs or mobile carriers. It may also be about making sure that one can receive broadcast content with the broadcast user experience anywhere in a typical local network.
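A rough calculation shows why zero-rating matters on metered services. The bitrates here are hypothetical examples rather than figures from any particular broadcaster: a 96 kbit/s audio stream uses about 0.043 GB per hour, while a 5 Mbit/s HD video stream uses 2.25 GB per hour, so three hours of evening viewing a day over 30 days would come to about 202.5 GB.

```python
def gigabytes_per_hour(bitrate_kbps: float) -> float:
    """Decimal gigabytes used by a constant-bitrate stream in one hour."""
    bits = bitrate_kbps * 1000 * 3600   # kbit/s -> bits per hour
    return bits / 8 / 1e9               # bits -> bytes -> GB


def monthly_gigabytes(bitrate_kbps: float, hours_per_day: float, days: int = 30) -> float:
    """Data used over a month of regular listening or viewing."""
    return gigabytes_per_hour(bitrate_kbps) * hours_per_day * days
```

Figures like these, multiplied across a household, quickly exceed typical mobile-broadband allowances, which is why free-to-air reception over metered links depends on the traffic not counting against the user's quota.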

Another factor to be considered as far as DVB-I or similar technologies are concerned is whether this impacts on content providers’ liabilities regarding broadcast rights for music and sports content. Here, some sports leagues or music copyright collection bodies consider Internet-based distribution as different from traditional broadcast media and add extra requirements on this distribution approach.

It can be about availability of content beyond the broadcaster’s home country, in a way that could contravene a blackout requirement or provide a competing source to the party holding exclusive rights for that territory. This is similar to the “grey-importing” of music rather than acquiring it through official distribution channels, which also brings in content not normally available in a particular country.

These issues may be answered through a framework of legal protections and universal-service obligations associated with providing free-to-air broadcast content. Such a framework would most likely be driven by countries that have a strong public-service and/or commercial free-to-air broadcast lobby.

Conclusion

Internet-based technologies are effectively being seen as a way to extend the reach of, or improve upon, the broadcast-media experience without detracting from its familiar interaction approaches. This is thanks to research into technologies that repackage broadcast signals, normally prepared for an RF transport, for delivery over the Internet.