An iPhone or iPad running iOS 11 has native support for the HEIF image file format

A new image file format has started to surface over the last few years, and it takes a different approach to storing images.

This file format, known as HEIF or High Efficiency Image Format, is defined and maintained by MPEG (the Moving Picture Experts Group), the body behind many commonly used video formats. Some see it as the “still-image version of HEVC”, HEVC being the video codec used for 4K UHDTV video files. HEVC is indeed the preferred codec for HEIF images, but the format is designed to support newer and better image codecs as well, including ones offering lossless image compression in a similar vein to what FLAC offers for audio files.

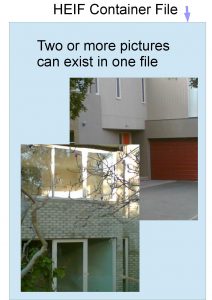

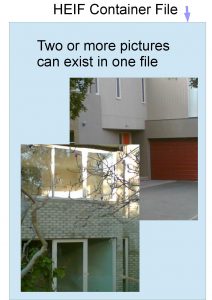

Unlike JPEG and the other image formats that came before it, HEIF is a “container file” that can hold multiple images and other objects, rather than a single image plus some associated metadata. The HEIF file format and the HEVC codec are also designed to take advantage of the powerful computing hardware now built into our devices.
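
HEIF is built on the ISO Base Media File Format (the same box structure MP4 uses), so a file is a sequence of “boxes”, each with a length, a four-character type and a payload. A HEIF file typically opens with an `ftyp` box, describes its items in a `meta` box and keeps the coded image data in `mdat`. The sketch below only walks the top-level boxes of an in-memory buffer to show that container structure; the helper names and the toy sample are invented for illustration, and nothing inside `meta` is decoded.

```python
import struct
from typing import Iterator, Optional, Tuple


def iter_boxes(data: bytes, start: int = 0,
               end: Optional[int] = None) -> Iterator[Tuple[str, int, int]]:
    """Yield (box_type, payload_start, box_end) for each box in data[start:end]."""
    end = len(data) if end is None else end
    pos = start
    while pos + 8 <= end:
        # Every box begins with a 32-bit big-endian size and a four-character type.
        size, raw_type = struct.unpack(">I4s", data[pos:pos + 8])
        header = 8
        if size == 1:
            # size == 1 means a 64-bit "largesize" follows the type field.
            if pos + 16 > end:
                raise ValueError(f"truncated largesize box at offset {pos}")
            size = struct.unpack(">Q", data[pos + 8:pos + 16])[0]
            header = 16
        elif size == 0:
            # size == 0 means the box runs to the end of the enclosing space.
            size = end - pos
        if size < header or pos + size > end:
            raise ValueError(f"malformed box at offset {pos}")
        yield raw_type.decode("latin-1"), pos + header, pos + size
        pos += size


def make_box(box_type: bytes, payload: bytes) -> bytes:
    """Build a simple box with a 32-bit size (hypothetical test helper)."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload


# A toy file: ftyp (major brand "heic", minor version 0, compatible brands),
# an empty stand-in for the meta box, and a few bytes of "coded image data".
sample = (
    make_box(b"ftyp", b"heic" + struct.pack(">I", 0) + b"mif1heic")
    + make_box(b"meta", b"\x00\x00\x00\x00")
    + make_box(b"mdat", b"\xff" * 16)
)
print([box_type for box_type, _, _ in iter_boxes(sample)])
# ['ftyp', 'meta', 'mdat']
```

A real reader would recurse into `meta` to find the item list, but even this top-level walk shows why one file can carry many objects: each is just another box or a byte range referenced from one.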

Simple concept view of the HEIF image file format

The primary rule here for HEIF is that it is a container file format specifically for collections of still images. It is not really about replacing video container formats like MP4 or MKV, which are used primarily for video footage.
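
Because HEIF is aimed at collections of still images, a file announces what it holds through the brands in its opening `ftyp` box. As I understand the HEIF specification, `mif1` signals still-image items and `msf1` an image sequence, with `heic`/`heix` and `hevc`/`hevx` as their HEVC-specific counterparts. The sketch reads those brands from a header held in memory; the two brand sets and the `describe` helper are assumptions for illustration, and a real reader would also check the `meta` box and codec profiles.

```python
import struct
from typing import List, NamedTuple


class FileType(NamedTuple):
    major_brand: str
    minor_version: int
    compatible_brands: List[str]


def read_ftyp(data: bytes) -> FileType:
    """Parse the ftyp box that opens a HEIF (ISOBMFF) file."""
    size, box_type = struct.unpack(">I4s", data[:8])
    if box_type != b"ftyp" or size < 16:
        raise ValueError("file does not start with a usable ftyp box")
    major = data[8:12].decode("latin-1")
    minor = struct.unpack(">I", data[12:16])[0]
    # The rest of the box is a list of four-character compatible brands.
    compatible = [data[i:i + 4].decode("latin-1") for i in range(16, size - 3, 4)]
    return FileType(major, minor, compatible)


# Assumed split: brands for still-image items vs. timed image sequences.
STILL_IMAGE_BRANDS = {"mif1", "heic", "heix"}
IMAGE_SEQUENCE_BRANDS = {"msf1", "hevc", "hevx"}


def describe(data: bytes) -> dict:
    ftyp = read_ftyp(data)
    brands = {ftyp.major_brand, *ftyp.compatible_brands}
    return {
        "still_images": bool(brands & STILL_IMAGE_BRANDS),
        "image_sequence": bool(brands & IMAGE_SEQUENCE_BRANDS),
    }


header = struct.pack(">I4s4sI", 24, b"ftyp", b"heic", 0) + b"mif1heic"
print(describe(header))  # {'still_images': True, 'image_sequence': False}
```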

What will this mean?

One HEIF file could contain a collection of images, such as “mapping images” that improve image playback in certain situations. It can also contain the images taken during a “burst” shot, where the camera takes a rapid sequence of images. The same applies to image bracketing, where you take a sequence of shots at different exposure, focus or other camera settings to identify the ideal setup or to create an advanced composite photograph.
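
In the real format each image is an “item” described in the `meta` box, a `pitm` box names the primary item that a basic viewer shows, and related items can be grouped. The sketch below is a simplified, hypothetical in-memory model of that idea (the class names, the `exposure_ev` field and the `"bracket"` group label are invented, not the spec’s box layout), showing how an exposure bracket could live in one container with one shot chosen as the default view.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ImageItem:
    item_id: int
    exposure_ev: float = 0.0  # hypothetical per-shot setting (exposure offset)


@dataclass
class HeifLikeContainer:
    """Toy model: many image items, one primary item, named groups of items."""
    items: Dict[int, ImageItem] = field(default_factory=dict)
    primary_id: Optional[int] = None
    groups: Dict[str, List[int]] = field(default_factory=dict)

    def add(self, item: ImageItem, group: Optional[str] = None) -> None:
        self.items[item.item_id] = item
        if group is not None:
            self.groups.setdefault(group, []).append(item.item_id)
        if self.primary_id is None:
            self.primary_id = item.item_id  # first item becomes the default view

    def members(self, group: str) -> List[ImageItem]:
        return [self.items[i] for i in self.groups.get(group, [])]


# An exposure bracket of three shots stored in one container.
photo = HeifLikeContainer()
for item_id, ev in [(1, -2.0), (2, 0.0), (3, 2.0)]:
    photo.add(ImageItem(item_id, ev), group="bracket")

# Make the middle exposure the image a basic viewer should show.
photo.primary_id = min(photo.members("bracket"), key=lambda it: abs(it.exposure_ev)).item_id
print(photo.primary_id)  # 2
```

Software that understands the group can still reach all three shots for an HDR merge, while a simple viewer only needs the primary item.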

This could lead to HEIF replacing GIF as the carrier for the animated images often found on the Web. Here, software could identify a sequence of images to be played like a video, and in-file metadata could make them repeat in a loop. This emulates what Apple’s Live Photos function does on iOS and lets users create high-quality animations, cinemagraphs (still photos with a small, discreet looping animation) or slide-shows in a single HEIF file.
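
To play such an animation, a viewer has to turn per-image display durations plus a loop setting into a timeline. The generator below sketches that step under assumed inputs: `durations_ms` and `loop_count` are hypothetical parameter names, and `loop_count=0` meaning “repeat forever” borrows GIF’s convention rather than anything specific to HEIF.

```python
from itertools import islice
from typing import Iterator, List, Tuple


def playback_schedule(durations_ms: List[int],
                      loop_count: int = 0) -> Iterator[Tuple[int, int]]:
    """Yield (start_time_ms, frame_index); loop_count=0 repeats forever."""
    if not durations_ms:
        return
    t = 0
    loops_done = 0
    while loop_count == 0 or loops_done < loop_count:
        for index, duration in enumerate(durations_ms):
            yield t, index
            t += duration
        loops_done += 1


# First five frame starts of an endlessly looping three-frame cinemagraph.
print(list(islice(playback_schedule([100, 100, 200]), 5)))
# [(0, 0), (100, 1), (200, 2), (400, 0), (500, 1)]
```

Using a generator means an endless loop costs nothing until frames are actually requested.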

HEIF uses the same codec associated with 4K UHDTV for digital photos

HEIF can also store non-image objects like text, audio or video alongside the images, which opens up a range of uses. For example, a smartphone could take a still and a short video clip at the same time, as Apple Live Photos does, or you could have images with text or audio notes. Alternatively, you could attach “stamps”, text and emoji to a series of photos sent as an email or message, much like the “stories” features in some social networks. In some ways, it could also serve as a way to package vector-graphics images with a “compatibility” or “preview” bitmap image.
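
HEIF ties related items together with typed references stored in an `iref` box. Reference types I am confident exist include `thmb`, pointing from a thumbnail or preview to its master image, and `cdsc`, pointing from an item that describes another (such as Exif metadata) to the image it describes. The sketch finds everything attached to one image; the item IDs and what each item holds are invented, and how text or audio notes would actually be carried is an assumption here.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (reference_type, from_item_id, to_item_ids)
Reference = Tuple[str, int, List[int]]


def attachments_for(refs: List[Reference], image_id: int) -> Dict[str, List[int]]:
    """Group the items that point at image_id by reference type."""
    found: Dict[str, List[int]] = defaultdict(list)
    for ref_type, from_id, to_ids in refs:
        if image_id in to_ids:
            found[ref_type].append(from_id)
    return dict(found)


refs = [
    ("thmb", 2, [1]),  # item 2 is a small preview bitmap of item 1
    ("cdsc", 3, [1]),  # item 3 (e.g. Exif or a hypothetical text note) describes item 1
    ("cdsc", 4, [5]),  # item 4 describes a different image
]
print(attachments_for(refs, 1))  # {'thmb': [2], 'cdsc': [3]}
```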

The HEIF format also supports non-destructive, metadata-based editing, where edits such as rectangular crops or image rotations are described in metadata rather than applied to the image data. This means you could revise an edit later or derive another edit from the same master image.
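
HEIF records such edits as “transformative properties” attached to an item, notably a clean-aperture crop (`clap`) and a rotation (`irot`) in anticlockwise 90° steps, so the coded pixels never change. The sketch mimics that on a tiny list-of-rows “image”. The `Edit` tuple and its top-left crop rectangle are simplifications of my own (the real `clap` box describes the crop differently), and the master is left untouched so the edit can be revised later.

```python
from typing import List, NamedTuple

Image = List[List[int]]  # rows of pixel values


class Edit(NamedTuple):
    x: int                  # crop rectangle, top-left corner (simplified)
    y: int
    width: int
    height: int
    quarter_turns: int = 0  # anticlockwise 90-degree steps, like irot


def render(master: Image, edit: Edit) -> Image:
    """Return an edited copy; the master image itself is never modified."""
    # Crop first, then rotate.
    out = [row[edit.x:edit.x + edit.width] for row in master[edit.y:edit.y + edit.height]]
    for _ in range(edit.quarter_turns % 4):
        # Transpose, then reverse the row order: one anticlockwise quarter turn.
        out = [list(r) for r in zip(*out)][::-1]
    return out


master = [[1, 2, 3],
          [4, 5, 6],
          [7, 8, 9]]
print(render(master, Edit(x=1, y=0, width=2, height=2, quarter_turns=1)))
# [[3, 6], [2, 5]]
print(master)  # unchanged: [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
```

Because only the `Edit` values are stored, a second, different edit of the same master is just another tuple.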

It also leads to the use of “derived images”, which are the results of these edits or of image compositions like an HDR or stitched-together panorama image. These can be generated when the file is opened, or created by the editing or image-management software and inserted in the HEIF file alongside the original images. The concept could also extend to rendering a video object and inserting it in the HEIF file.
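
One derived-image type HEIF defines is the `grid`, which assembles a larger picture from smaller image items laid out row by row and then trims the result to an output width and height. It is a simple stand-in for the compositions described above. The function below sketches that composition on list-of-rows tiles; the function and parameter names are mine, and real tiles would first have to be decoded.

```python
from typing import List

Tile = List[List[int]]  # rows of pixel values


def compose_grid(tiles: List[Tile], rows: int, columns: int,
                 output_width: int, output_height: int) -> Tile:
    """Place equally sized tiles row-major on a canvas, then crop to the output size."""
    if rows < 1 or columns < 1 or len(tiles) != rows * columns:
        raise ValueError("need exactly rows * columns tiles")
    tile_height = len(tiles[0])
    canvas: Tile = []
    for r in range(rows):
        for y in range(tile_height):
            line: List[int] = []
            for c in range(columns):
                line.extend(tiles[r * columns + c][y])
            canvas.append(line)
    # Keep only the top-left output_width x output_height region.
    return [line[:output_width] for line in canvas[:output_height]]


tiles = [[[n, n], [n, n]] for n in (1, 2, 3, 4)]  # four 2x2 tiles
print(compose_grid(tiles, rows=2, columns=2, output_width=3, output_height=3))
# [[1, 1, 2], [1, 1, 2], [3, 3, 4]]
```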

HEIF makes better use of advanced photo options offered by advanced cameras

Having a derived image or video inserted in the HEIF file helps where the playback device or server doesn’t have enough computing power to create an edit or composition of acceptable quality in an acceptable timeframe. Similarly, it helps where the software used at the production or editing stage renders edits or compositions far better than the software on the serving or playback device.
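
The trade-off in the paragraph above amounts to a simple per-file decision a viewer or server could make. This is a hypothetical policy, not behaviour defined by HEIF: the flags and the time budget are invented inputs, and a real player would estimate rendering cost in its own way.

```python
from enum import Enum


class Source(Enum):
    STORED_DERIVED = "use the derived image stored in the file"
    RENDER_LOCALLY = "render the edit/composition from the originals"
    PRIMARY_ONLY = "show the primary image unedited"


def choose_source(has_stored_derived: bool, can_render: bool,
                  estimated_render_ms: float, budget_ms: float) -> Source:
    # A pre-rendered result is preferred: it costs nothing to compute and may
    # come from better software than the playback device has.
    if has_stored_derived:
        return Source.STORED_DERIVED
    # Otherwise render only if the device can do it within the time budget.
    if can_render and estimated_render_ms <= budget_ms:
        return Source.RENDER_LOCALLY
    return Source.PRIMARY_ONLY


print(choose_source(False, True, estimated_render_ms=900, budget_ms=250))
# Source.PRIMARY_ONLY
```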

The file format even provides alternative viewing options for the same resource. For example, a wide-angle panorama built from a series of shots could be viewed either as a wide-aspect-ratio image or as a looping video sequence representing the camera panning across the scene.
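
The base media file format behind HEIF has an `altr` entity group for items or tracks that are alternatives to one another. The sketch only borrows that general idea: walk the alternatives and take the first one the viewer can present. The preference ordering, item names and content kinds are assumptions for illustration.

```python
from typing import List, Optional, Set, Tuple

# (item name, kind of content), assumed to be listed in order of preference
Alternative = Tuple[str, str]


def pick_alternative(alternatives: List[Alternative],
                     supported_kinds: Set[str]) -> Optional[str]:
    """Return the first alternative the viewer can present, or None."""
    for name, kind in alternatives:
        if kind in supported_kinds:
            return name
    return None


panorama = [("panning-video", "image-sequence"), ("wide-still", "still-image")]
print(pick_alternative(panorama, {"still-image"}))                    # wide-still
print(pick_alternative(panorama, {"still-image", "image-sequence"}))  # panning-video
```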

What kind of support exists for the HEIF format?

At the moment, Apple’s iOS 11, tvOS 11 (Apple TV) and macOS High Sierra provide native support for the HEIF format. The new iPhone and iPad models provide hardware-level support for the HEVC codec used by this format, and iOS 11, along with the iCloud service, automatically exports these images in a compatible format for email and other services that don’t implement HEIF.

Microsoft intends to integrate HEIF into Windows 10 from the Spring Creators Update onwards. Google, likewise, intends to build it into the “P” version of Android, the next feature version of that mobile platform.

As for dedicated devices like digital cameras, TVs and printers, there isn’t any native support for HEIF yet because it is a relatively new image format. Most likely this will come through newer devices or through software updates to existing ones.

Let’s not forget that newer image viewing, editing and management software that is continually maintained will be able to work with these files. Online services like Dropbox, Apple iCloud or Facebook are offering, or will offer, differing levels of HEIF support as their platforms evolve. Depending on the service, this will mean holding the files “as-is” or exporting and displaying them in a “best-case” format.
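
For a service delivering images over HTTP, one way to choose between handing out the HEIF file “as-is” and a best-case JPEG is to look at the client’s `Accept` header. `image/heic` is the registered media type for HEVC-coded HEIF files such as those from an iPhone; everything else here (the function names, the JPEG fallback, the decision to ignore wildcards) is an assumed policy, and the header parsing is deliberately simplified.

```python
from typing import Dict


def parse_accept(header: str) -> Dict[str, float]:
    """Map each media type in an Accept header to its q-value (simplified)."""
    prefs: Dict[str, float] = {}
    for part in header.split(","):
        fields = [f.strip() for f in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0
        for param in fields[1:]:
            if param.lower().startswith("q="):
                try:
                    q = float(param[2:])
                except ValueError:
                    q = 0.0
        prefs[media_type] = q
    return prefs


def choose_image_type(accept_header: str) -> str:
    prefs = parse_accept(accept_header)
    # Only an explicit listing counts for HEIF: "image/*" does not prove the
    # client can decode it.
    heic_q = prefs.get("image/heic", 0.0)
    jpeg_q = prefs.get("image/jpeg", prefs.get("image/*", prefs.get("*/*", 0.0)))
    if heic_q > 0 and heic_q >= jpeg_q:
        return "image/heic"
    return "image/jpeg"  # the "best-case" fallback for everyone else


print(choose_image_type("image/heic,image/jpeg;q=0.9"))  # image/heic
print(choose_image_type("image/webp,image/*,*/*;q=0.8"))  # image/jpeg
```

Ignoring wildcards for HEIF is the key design choice: many browsers accept `image/*` without being able to decode HEVC-coded images.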

There will be some compatibility issues with hardware and software that don’t support this format. These may be addressed by operating systems, image editing / management software or online services that offer HEIF file conversion. Such software will need to export particular images, or derived images, in an HEIF file as a JPEG image for stills, or as a GIF, MP4 or QuickTime MOV file for timed sequences, animations and similar material.
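
The conversion rule in the paragraph above can be written as a small lookup. This is an assumed policy rather than any product’s actual behaviour: the content kinds and the `target_plays_video` flag are hypothetical, MP4 stands in for MP4 or QuickTime MOV, and the audio check reflects the fact that GIF cannot carry sound.

```python
def export_format(kind: str, target_plays_video: bool = True,
                  has_audio: bool = False) -> str:
    """Pick an export format for content taken out of a HEIF file."""
    if kind == "still":
        return "JPEG"
    if kind == "timed":  # image sequence, animation, live-photo style clip
        if target_plays_video:
            return "MP4"
        if has_audio:
            # GIF cannot carry sound, so refuse rather than silently drop the audio.
            raise ValueError("target cannot play video and GIF has no audio")
        return "GIF"
    raise ValueError(f"unknown content kind: {kind!r}")


print(export_format("still"))                            # JPEG
print(export_format("timed", target_plays_video=False))  # GIF
```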

For DLNA-based media servers, a similar approach to an online service would apply: the media server would have to export original or derived images from these files held on a NAS as a JPEG still image, or as a GIF or MP4 video file where appropriate.

Conclusion

As the container-based HEIF image format comes on to the scene as a possible replacement for JPEG and GIF image files, it shows promise for both the snapshooter and the advanced photographer.